Authentication has become an integral part of computer usage, but it remains an interruptive step in people’s workflow. To authenticate to a computer, depending on the authentication method, users must exert mental effort (e.g., recalling their password) and/or physical effort (e.g., typing their password). These factors increase the cost of context switching for users – the cost of switching attention from a primary task to the authentication step and back to the task – and disrupt their workflow. Clinical staff have often told us they are frustrated by the need to repeatedly log in to their clinical desktop computers – sometimes hundreds of times in a day.

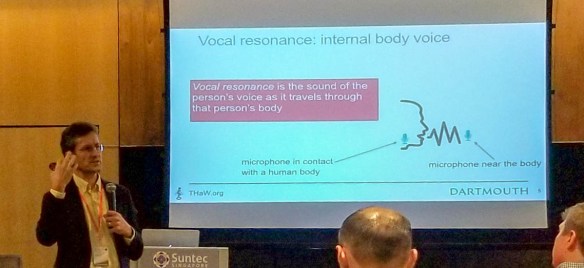

In this paper, presented by David Kotz at Ubicomp’18 in Singapore, we propose Seamless Authentication using Wristbands (SAW). SAW is an authentication method designed to address a shortcoming of proximity-based authentication methods: they do not capture whether the user actually intends to authenticate. SAW addresses this by adding a quick, low-effort user input step that explicitly captures the user’s intent to authenticate. In SAW, the user’s wristband (e.g., a fitness tracker or smartwatch) acts as the user’s authentication token. Read more below, and in the paper.

THaW welcomes Professor
