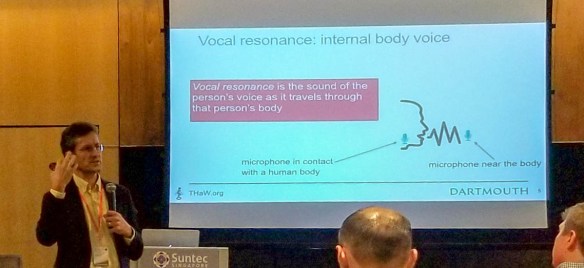

At the Joint Conference on Pervasive and Ubiquitous Computing (UbiComp), David Kotz presented THaW’s work on a novel biometric approach to identifying and verifying who is wearing a device, an important consideration for a medical device that may be collecting diagnostic information that is fed into an electronic health record. The approach uses vocal resonance, i.e., the sound of your voice as it passes through bones and tissues, so that a device can recognize its wearer and verify that it is physically in contact with the wearer, not just nearby. The team implemented the method on a wearable-class computing device and showed high accuracy and low energy consumption.

Rui Liu, Cory Cornelius, Reza Rawassizadeh, Ron Peterson, and David Kotz. Vocal Resonance: Using Internal Body Voice for Wearable Authentication. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT) (UbiComp), 2(1), March 2018. DOI 10.1145/3191751.

Abstract: We observe the advent of body-area networks of pervasive wearable devices, whether for health monitoring, personal assistance, entertainment, or home automation. For many devices, it is critical to identify the wearer, allowing sensor data to be properly labeled or personalized behavior to be properly achieved. In this paper we propose the use of vocal resonance, that is, the sound of the person’s voice as it travels through the person’s body – a method we anticipate would be suitable for devices worn on the head, neck, or chest. In this regard, we go well beyond the simple challenge of speaker recognition: we want to know who is wearing the device. We explore two machine-learning approaches that analyze voice samples from a small throat-mounted microphone and allow the device to determine whether (a) the speaker is indeed the expected person, and (b) the microphone-enabled device is physically on the speaker’s body. We collected data from 29 subjects, demonstrate the feasibility of a prototype, and show that our DNN method achieved balanced accuracy 0.914 for identification and 0.961 for verification by using an LSTM-based deep-learning model, while our efficient GMM method achieved balanced accuracy 0.875 for identification and 0.942 for verification.
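
For readers unfamiliar with the metric quoted in the abstract, here is a minimal sketch of how balanced accuracy is commonly computed for a binary task such as verification: the mean of the recall on each class. The function name and the labels below are hypothetical and made up for illustration; they are not the paper's data, and the paper's exact evaluation protocol may differ.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall for binary labels.

    Assumed convention (not from the paper): 1 = the expected wearer,
    0 = anyone else, or the device is not on the body.
    """
    pairs = list(zip(y_true, y_pred))
    pos = sum(1 for t, _ in pairs if t == 1)
    neg = sum(1 for t, _ in pairs if t == 0)
    if pos == 0 or neg == 0:
        raise ValueError("need at least one sample of each class")
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true accepts
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true rejects
    return 0.5 * (tp / pos + tn / neg)


# Made-up example: 4 genuine samples, 8 impostor samples.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 1]

score = balanced_accuracy(y_true, y_pred)
plain = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"balanced accuracy: {score:.4f}, plain accuracy: {plain:.4f}")
```

Because each class contributes equally, balanced accuracy is not inflated when one class (for example, impostor samples) greatly outnumbers the other, which is one plausible reason to report it instead of plain accuracy.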